lifelib v0.3.1 (24 October 2021)

This release adds a new example to the savings library and updates some formulas in the savings models.

To update lifelib, run the following command in a terminal (it is a shell command, not a Python statement):

$ pip install lifelib --upgrade
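After upgrading, it can be useful to confirm which version is actually installed in the active environment. The following is a minimal sketch using only the standard library's `importlib.metadata`. The helper name `installed_version` is hypothetical and not part of lifelib.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_version(package="lifelib"):
    """Return the installed version string of `package`, or None if absent.

    `installed_version` is an illustrative helper, not a lifelib function.
    """
    try:
        return version(package)
    except PackageNotFoundError:
        return None


# Prints the installed lifelib version, or None if lifelib is not installed.
print(installed_version())
```

If the printed version is older than expected, the upgrade was probably applied to a different Python environment from the one running this check.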

New Example

An example is added to the savings library.

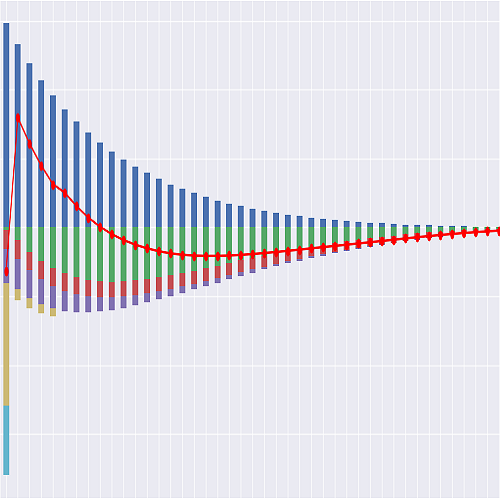

This example shows how to extend CashValue_ME to a stochastic model. It works through a classic exercise: calculating the time value of options and guarantees on a plain variable annuity with a guaranteed minimum accumulation benefit (GMAB) by two methods, the Black-Scholes-Merton formula and Monte Carlo simulation with risk-neutral scenarios.
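The comparison between the two methods can be illustrated outside the model. The sketch below is not the CashValue_ME_EX1 implementation. It assumes a single policy with no fees, lapses or mortality, so the GMAB reduces to a European put on the account value with the guarantee as the strike. All function names and parameter values are hypothetical.

```python
import math
import random
from statistics import NormalDist


def bsm_put(av0, guarantee, rate, sigma, maturity):
    """Black-Scholes-Merton price of a European put with no dividends."""
    n = NormalDist()
    sqrt_t = math.sqrt(maturity)
    d1 = (math.log(av0 / guarantee)
          + (rate + 0.5 * sigma ** 2) * maturity) / (sigma * sqrt_t)
    d2 = d1 - sigma * sqrt_t
    return (guarantee * math.exp(-rate * maturity) * n.cdf(-d2)
            - av0 * n.cdf(-d1))


def mc_put(av0, guarantee, rate, sigma, maturity,
           n_scenarios=100_000, seed=1234):
    """Monte Carlo estimate of the same put under risk-neutral scenarios.

    The account value at maturity is drawn from a lognormal distribution
    whose drift is the risk-free rate (geometric Brownian motion).
    """
    rng = random.Random(seed)
    drift = (rate - 0.5 * sigma ** 2) * maturity
    vol = sigma * math.sqrt(maturity)
    total = 0.0
    for _ in range(n_scenarios):
        av_t = av0 * math.exp(drift + vol * rng.gauss(0.0, 1.0))
        total += max(guarantee - av_t, 0.0)  # shortfall paid by the guarantee
    return math.exp(-rate * maturity) * total / n_scenarios


# Illustrative assumptions: initial account value and guarantee of 100,
# 2% risk-free rate, 20% volatility, 10-year maturity.
params = dict(av0=100.0, guarantee=100.0, rate=0.02, sigma=0.2, maturity=10.0)
closed_form = bsm_put(**params)
simulated = mc_put(**params)
print(f"BSM: {closed_form:.3f}  Monte Carlo: {simulated:.3f}")
```

Under these simplifications the two results should agree up to Monte Carlo sampling error, which shrinks as the number of scenarios grows.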

This example consists of an example model, a Jupyter notebook and some Python scripts. The model is developed from CashValue_ME and named CashValue_ME_EX1. The Python scripts included in savings are used to draw the graphs on the Gallery page. The Jupyter notebook describes the CashValue_ME_EX1 model, explains the changes from the original model, and outputs some of the graphs on the Gallery page.

| File or Folder | Description |
|---|---|
| CashValue_ME_EX1 | The example model for savings_example1.ipynb |
| savings_example1.ipynb | Jupyter notebook 1. Simple Stochastic Example |
| plot_av_paths.py | Python script for Account value paths |
| plot_rand.py | Python script for Account value distribution |
| plot_option_value.py | Python script for Monte Carlo vs Black-Scholes-Merton |

Fixes and Updates

The following formulas are updated.